The Today and Now: Deep Learning and the way it works

Machine Learning. Deep Learning. Artificial Intelligence - I'm sure you've heard these buzzwords one too many times. Be it in the workplace or on the news, the ability of machines to supposedly learn has been a thing of the future for the longest time - but now it's officially here.

Before we dive in any deeper, I should probably clear the air. Machine learning is really not that new. "In the 1950s, British mathematician Alan Turing proposed his artificially intelligent 'learning machine'" (Cosmos, "What is Deep Learning and How Does it Work?"). The truth is that machine learning is, at its core, mathematical, algorithmic, and statistical analysis, hiding behind the facade that is a plethora of computing libraries and systems.

Without pushing too far into the nitty-gritty of machine learning, one can generally argue that it is composed of three main "problems": prediction (estimating a value), classification (assigning a label), and clustering (grouping similar items). Most of the time, we are trying to make decisions using data, and machine learning gives those decisions a mathematical backing. There's more within this field, but I'll abstract that away for now and leave you to search through the wealth of material there is to learn about it.

Now that we know what machine learning is, we can proceed to deep learning. I'm sure you've heard of Tesla. You must often wonder - how do Teslas go on autopilot, making driving decisions on their own? Well, as odd as it seems, deep learning is responsible for a good bit of that.

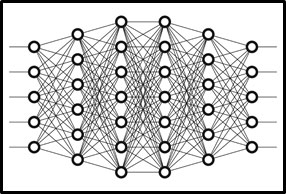

Deep learning is a subset of machine learning, the field that examines computer algorithms that learn and improve on their own. It is built on one family of these algorithms: neural networks (Cosmos, "What is Deep Learning and How Does it Work?"). Inspired by the nerve cells (neurons) that make up the human brain, neural networks consist of interconnected layers of artificial neurons - the more layers, the deeper the network.

In the brain, a neuron is a cell. It receives signals from the neurons connected to it and, in turn, has either an excitatory or an inhibitory effect on its adjacent neurons. If the signal a neuron receives is strong enough, it fires and passes a signal along to the next neuron. It's in this framework that our minds send signals - and it's the way we create compound thoughts that make our world what it is!

In an artificial neural network, signals also travel between 'neurons'. But instead of firing an electrical signal, a neural network assigns weights (numerical values) to the connections between neurons. A signal arriving over a more heavily weighted connection will exert more of an effect on the next layer of neurons. The final layer puts together these weighted inputs to come up with an answer.
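To make the idea of weighted signals concrete, here is a minimal sketch of a tiny network passing two inputs through one hidden layer to a single output. Everything in it is an assumption for illustration: the function names (`sigmoid`, `layer_forward`), the layer sizes, and the weight and bias values are made up rather than learned, and the sigmoid is just one common choice of activation function.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """Compute the outputs of one layer.

    Each neuron takes a weighted sum of all inputs, adds its bias,
    and passes the result through the sigmoid activation.
    """
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs


# Hypothetical values, chosen by hand for illustration only.
# A real network learns its weights, and they can be any real number.
inputs = [0.5, 0.8]
hidden_weights = [[0.9, -0.4],   # weights into hidden neuron 1
                  [0.2, 0.7]]    # weights into hidden neuron 2
hidden_biases = [0.0, -0.1]
output_weights = [[1.5, -1.1]]   # weights into the single output neuron
output_biases = [0.05]

hidden = layer_forward(inputs, hidden_weights, hidden_biases)
answer = layer_forward(hidden, output_weights, output_biases)[0]
print(f"network output: {answer:.3f}")
```

A larger weight makes its input count for more in the sum, and a negative weight pushes the sum down, which loosely mirrors the excitatory and inhibitory effects described for biological neurons.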

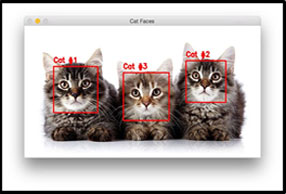

Many of the decisions we make work in a similar way. Let's consider the issue of looking at a picture and trying to identify whether it shows a cat. To you and me, this is a simple, almost trivial task. But let's say I told you to program your device to do that. I give you a camera, a nice computer, and all the time in the world. Well, some of the world's greatest minds had to butt heads for a while before we were able to make that happen. That butting has today led to things like these neural networks.

Consider a cat. Cats have similar features, but a picture of a cat could have numerous variables: lighting, shade, size, depth, color, the list goes on! If you've coded in the past, you know that no number of "if" conditionals is going to solve that for you! So, let's think about how neural networks go about this.

The first essential part of this is data - labeled data, to be more specific. I need to show the neural network enough photos of cats (that are specifically labeled as cats) and photos of things that are not cats (and labeled as such). During this training process, the network changes its weights so that its outputs fit the training data. It makes numerical adjustments to maximize the accuracy of identification (Cosmos, "What is Deep Learning and How Does it Work?"). At the end, you can feed it an image and expect a simple output - cat or no cat! This is called supervised learning. Unsupervised learning takes in unlabeled data, so the network has to figure out patterns for itself! That is more complicated and nuanced, and I'll leave you to read more about that.
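The training loop can be sketched in a few lines, with heavy simplifications. This is not a deep network and it does not look at images: it is a single artificial neuron (logistic regression) trained by gradient descent, swapped in here because it shows the same "adjust the weights to fit labeled data" idea at a readable size. The two features (`ear_pointiness`, `whisker_score`), the data values, and the names `predict` and `train` are all hypothetical.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


# Hypothetical labeled data: ([ear_pointiness, whisker_score], label),
# where label 1 means "cat" and label 0 means "not a cat".
training_data = [
    ([0.9, 0.8], 1),
    ([0.8, 0.9], 1),
    ([0.7, 0.7], 1),
    ([0.2, 0.1], 0),
    ([0.1, 0.3], 0),
    ([0.3, 0.2], 0),
]


def predict(features, weights, bias):
    """Return the neuron's estimated probability that the input is a cat."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(total)


def train(data, epochs=2000, learning_rate=0.5):
    """Nudge the weights after every example to shrink the prediction error."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            # Positive error: guessed too high. Negative: guessed too low.
            error = predict(features, weights, bias) - label
            for i, x in enumerate(features):
                weights[i] -= learning_rate * error * x
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(training_data)
for features, label in training_data:
    guess = "cat" if predict(features, weights, bias) > 0.5 else "no cat"
    print(features, "labeled", label, "->", guess)
```

After training, `predict` can be called on a feature pair the neuron has never seen, which is the "feed it an image and expect a simple output" step in miniature. A real image classifier would learn its own features from raw pixels across many layers instead of relying on two hand-picked numbers.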

But now that we understand what these networks are and how they work, why do they work? More generally, why does any deep learning method work? Here's the catch - we don't completely understand. You must then ask: how can you make something and not know why it works? Well, we understand its results. Deep learning has an incredible ability to generalize from the examples it has seen, and is often quick in doing so, which is why you would expect it to work in a variety of situations! Unfortunately, even the best of mathematicians working behind the scenes today are still arguing about why and how these deep learning methods work - and why they're so incredible at doing what they do (Medium, "Modern Theory of Deep Learning: Why Does It Work So Well").