Is there a Data Science "Hype Bubble"?

The noise around AI, data science, machine learning, and deep learning is reaching a fever pitch. As this noise has grown, our industry has experienced a divergence in what people mean when they say "AI", "machine learning", or "data science". It can be argued that our industry lacks a common taxonomy. If there is a taxonomy, then we, as data science professionals, have not done a very good job of adhering to it. This has two consequences:

- The creation of a hype bubble that leads to unrealistic expectations.

- An increasing inability to communicate, especially with non-data science colleagues.

Concise Definitions

- Data Science: A discipline that uses code and data to build models that are put into production to generate predictions and explanations.

- Machine Learning: A class of algorithms or techniques for automatically capturing complex data patterns in the form of a model.

- Deep Learning: A class of machine learning algorithms that uses neural networks with more than one hidden layer.

- AI: A category of systems that operate in a way that is comparable to humans in the degree of autonomy and scope.

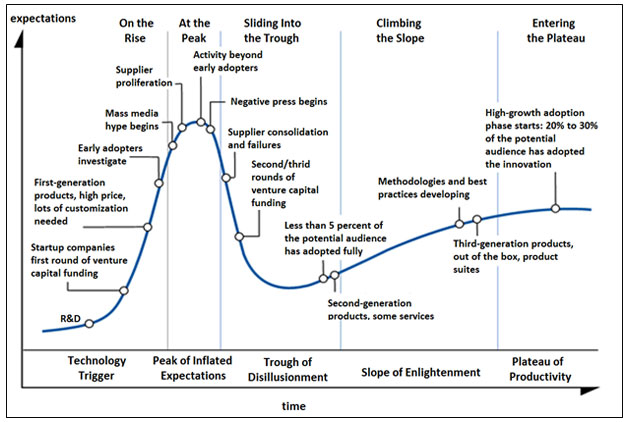

Since 2012, the data science industry has moved extremely quickly. It’s gone through almost every stage in the Gartner hype cycle.

Hype

Our terms have a lot of star power. They inspire people to dream and imagine a better world, which leads to their overuse. More buzz around our industry raises the tide that lifts all boats, right? Sure, we all hope the tide will continue to rise. But we should work for a sustainable rise and avoid a hype bubble that will create widespread disillusionment if it bursts.

The frequent overuse of "AI" when referring to any solution that makes any kind of prediction has been a major cause of this hype. Because of this overuse, people instinctively associate data science projects with near-perfect, human-like autonomous solutions. Or, at a minimum, people perceive that data science can easily solve their specific predictive need, without any regard to whether their organizational data will support such a model.

Data Science

It can be difficult to craft a definition for data science while, at the same time, distinguishing it from statistical analysis.

Statistical analysis is based on samples, controlled experiments, probabilities, and distributions. It usually answers questions about the likelihood of events or the validity of statements. It uses techniques such as the t-test, the chi-square test, ANOVA, design of experiments (DOE), and response surface designs. These techniques sometimes build models too. For example, response surface designs are techniques to estimate the polynomial model of a physical system based on observed explanatory factors and how they relate to the response factor.
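To make the response surface idea concrete, here is a minimal sketch that fits a second-order polynomial to one explanatory factor by ordinary least squares. Everything in it is an assumption made for illustration: the function names `solve_linear_system` and `fit_quadratic_surface`, the single-factor setup, and the made-up `settings` and `responses` values. Real response surface designs usually involve several factors and a designed experiment.

```python
from typing import List, Sequence


def solve_linear_system(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augment the matrix so row operations apply to both sides at once.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x


def fit_quadratic_surface(xs: Sequence[float], ys: Sequence[float]) -> List[float]:
    """Least-squares fit of y = b0 + b1*x + b2*x**2 via the normal equations."""
    design = [[1.0, x, x * x] for x in xs]
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(3)]
           for i in range(3)]
    xty = [sum(row[i] * y for row, y in zip(design, ys)) for i in range(3)]
    return solve_linear_system(xtx, xty)


# Hypothetical experiment: five input settings and the observed responses.
settings = [0.0, 1.0, 2.0, 3.0, 4.0]
responses = [2.0, 4.5, 6.0, 6.5, 6.0]

coefficients = fit_quadratic_surface(settings, responses)
print("b0, b1, b2 =", [round(c, 4) for c in coefficients])
```

The fitted coefficients describe how the response curves with the input, which is the kind of model an engineer would use to reason about what might happen at a new setting.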

One key point is that data science models are "put into production": they are applied to new data to make future predictions and descriptions. While it is true that response surface models can be used on new data to predict a response, it is usually a hypothetical prediction about what might happen if the inputs were changed. The engineers then change the inputs and observe the responses that are generated by the physical system in its new state. The response surface model is not put into production. It does not take new input settings by the thousands, over time, in batches or streams, and predict responses.
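The distinction can be sketched in code. In the one-off use, a fitted model answers a single hypothetical question. In the production use, the same model is applied repeatedly to batches of new inputs as they arrive. The names `predict` and `score_batches`, the coefficient values, and the batches below are all hypothetical, chosen only to illustrate the contrast.

```python
from typing import Iterable, Iterator, List, Sequence

# Hypothetical coefficients for y = b0 + b1*x + b2*x**2, assumed already fitted.
COEFFICIENTS = (2.0, 3.0, -0.5)


def predict(coeffs: Sequence[float], x: float) -> float:
    """Evaluate the quadratic model at one input setting."""
    return coeffs[0] + coeffs[1] * x + coeffs[2] * x * x


def score_batches(
    coeffs: Sequence[float], batches: Iterable[Sequence[float]]
) -> Iterator[List[float]]:
    """Yield predictions for each incoming batch, one batch at a time."""
    for batch in batches:
        yield [predict(coeffs, x) for x in batch]


# One-off, hypothetical question: what might happen at a setting of 2.0?
what_if = predict(COEFFICIENTS, 2.0)

# Production-style use: score new input settings as they arrive in batches.
incoming = [[0.0, 1.0], [2.0, 3.0, 4.0]]  # made-up batches for illustration
scored = list(score_batches(COEFFICIENTS, incoming))
print(what_if, scored)
```

Because `score_batches` is a generator, it works the same way whether the batches come from a list, a file, or a stream, which is closer to how a production model is exercised.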

This data science definition is by no means foolproof, but putting predictive and descriptive models into production starts to capture the essence of data science.

Communication

Incorrect use of terms also gums up conversations. This can be especially damaging in the early planning phases of a data science project, when a cross-functional team assembles to articulate goals and design the end solution. The most common confusion of terms I hear is when someone talks about AI solutions, or doing AI, when they really should be talking about building a deep learning or machine learning model. Far too often, the interchange of terms seems to be deliberate, with the speaker hoping to get a hype boost by saying "AI". Let’s dive into each of the definitions and see if we can come to an agreement on taxonomy.